I’ve been leaning on AI a lot lately. It’s efficient. It gets the job done. But I’ve noticed something. When I let the machine do the heavy lifting, my brain turns off a little bit. I stop scrutinizing every word.

It turns out, I’m not alone. And that is exactly what has the Public Company Accounting Oversight Board (PCAOB) terrified.

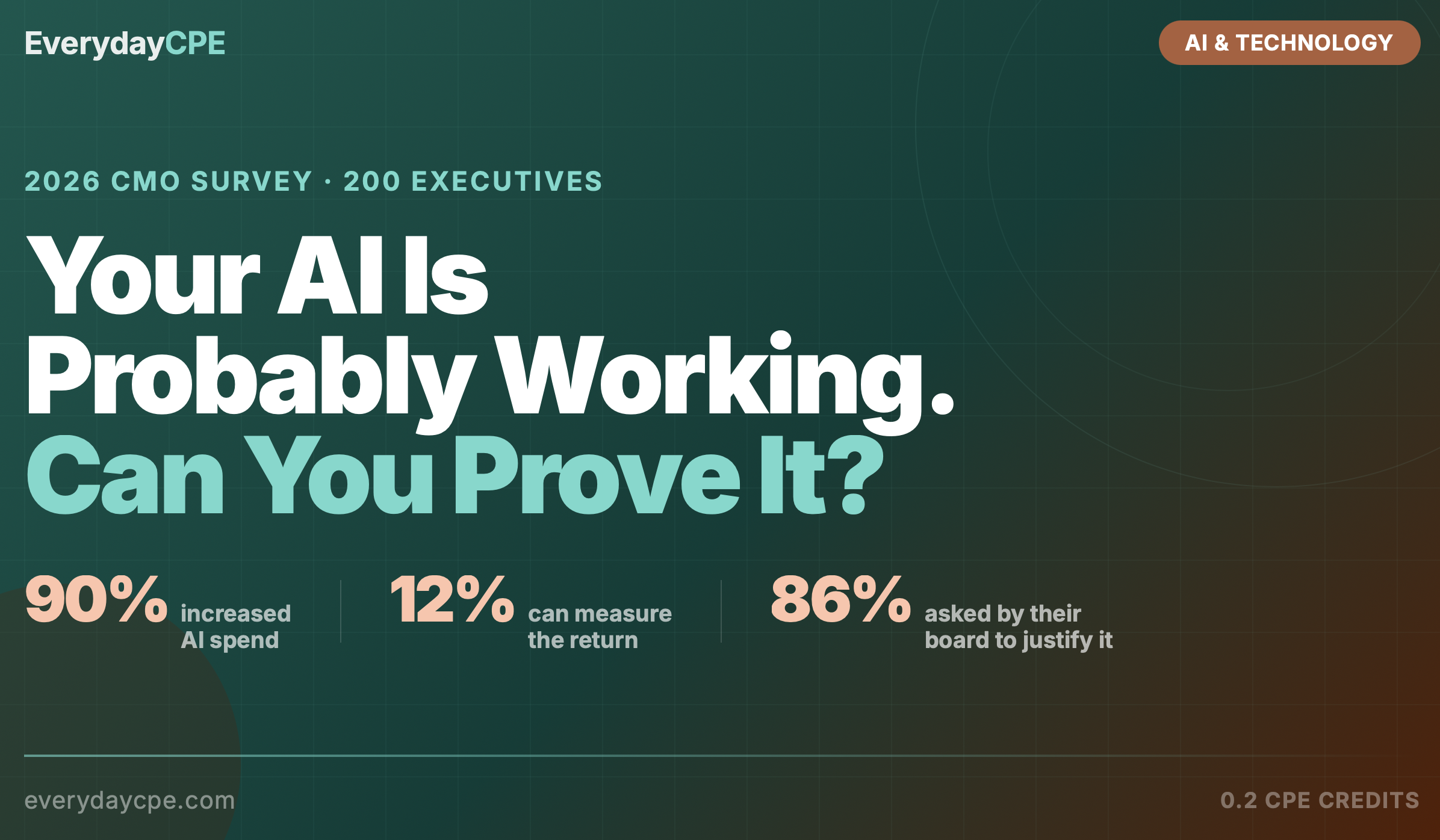

In my latest course on EverydayCPE, I dug into the recent warnings issued by the PCAOB about two massive trends reshaping our profession: Artificial Intelligence and Private Equity (PE).

Here is a look at what I found, why the regulators are stepping in, and what it means for the future of auditing.

The “Brain Off” Phenomenon

The PCAOB isn’t just guessing that AI makes us lazy. They have data.

At its recent conference, PCAOB leadership pointed to an MIT study on Large Language Models (LLMs). The findings were stark: people using LLMs show reduced “cognitive engagement.”

In plain English? When you use AI, you stop thinking as hard. You don’t retain as much information. You don’t learn as deeply.

I looked through the transcript of the recent PCAOB discussions, and one statistic jumped out at me. In some of the trials discussed, only about 27% of people using AI tools actively reviewed the results.

That means nearly three-quarters of users just took the AI’s output as gospel.

In auditing, that is a disaster waiting to happen. The core function of an auditor is professional skepticism—the duty to question everything. If we hand that duty over to a chatbot, we aren’t auditing; we’re just rubber-stamping.

The Private Equity Problem

The second pillar of the PCAOB’s warning involves the flood of Private Equity money entering accounting firms.

Traditionally, accounting firms are partnerships. They play the long game. But PE operates on a different clock. PE investors usually look for an exit within 5 to 8 years.

Here is the conflict:

- The Auditor’s Goal: Accuracy, independence, public trust.

- The PE Firm’s Goal: Maximizing cash flow and profits for a quick exit.

The fear is that short-term profit motives will lead to cost-cutting in audit quality. If you strip-mine a firm for efficiency to boost the valuation for a sale, the audit quality inevitably suffers.

Tinfoil Hat Corner: The Tangled Web

Let’s put on the tinfoil hat for a second.

The PCAOB raised a specific concern about independence that feels like a conspiracy thriller. There was a case involving a manufacturer in the Midwest where things got messy.

The accounting firm had an issue with the books. When regulators looked closer, they found a connection. The PE firm that sponsored the accounting firm also had an investment in the company being audited.

Even if there was no actual foul play, the perception is terrible.

We are moving toward a world of “fund of funds” and complex investment vehicles. It is getting harder to draw a straight line between owners and assets. If a PE giant owns a stake in both the auditor and the client, how can we possibly claim independence?

I suspect we are going to see a major headline soon where an audit failure is directly traced back to this kind of incestuous ownership structure.

Moving From Sampling to 100% Analysis

It isn’t all doom and gloom. I am an optimist about technology.

For decades, we relied on sampling. We tested a fraction of the transactions and hoped they represented the whole.

AI gives us the ability to analyze 100% of journal entries, contracts, and invoices. That is a massive leap forward. We can spot anomalies that a human sampling 50 items would never find.
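To put a rough number on that, here is a minimal Python sketch of how likely a 50-item random sample is to miss every anomalous entry. The population size and anomaly count are illustrative assumptions of mine, not figures from the PCAOB, and the function name is hypothetical. It assumes simple random sampling without replacement, which is the textbook hypergeometric case rather than any firm's actual sampling methodology.

```python
from math import comb


def prob_sample_misses_all(population: int, anomalies: int, sample_size: int) -> float:
    """Chance that a simple random sample (drawn without replacement)
    contains none of the anomalous items in the population.

    This is the hypergeometric probability of zero "hits":
    C(population - anomalies, sample_size) / C(population, sample_size).
    """
    if not 0 <= anomalies <= population:
        raise ValueError("anomalies must be between 0 and population")
    if not 0 <= sample_size <= population:
        raise ValueError("sample_size must be between 0 and population")
    # math.comb returns 0 when sample_size exceeds the number of clean items,
    # so the result is 0.0 once the sample is too large to avoid every anomaly.
    return comb(population - anomalies, sample_size) / comb(population, sample_size)


# Illustrative assumption: 10,000 journal entries, 5 of them problematic,
# and a traditional sample of 50 items.
miss_chance = prob_sample_misses_all(population=10_000, anomalies=5, sample_size=50)
print(f"Chance the sample misses every anomaly: {miss_chance:.1%}")
```

Under those assumed numbers, the sample misses all five problem entries about 97.5% of the time. Testing the full population drives that miss rate to zero, at least for anomalies the tool is able to recognize.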

But the PCAOB was clear in their guidance: Technology-neutral standards are no longer enough.

They are starting to look “under the hood” of these AI tools while they are still in development. They aren’t waiting for the audit failure to happen; they are inspecting the algorithms now.

The Bottom Line: Human in the Loop

The regulator’s message is simple: The auditor, not the algorithm, is responsible.

“Human in the loop” can’t just be a buzzword we throw into a pitch deck. It has to be the operational reality. You cannot outsource your professional judgment to a server farm.

In the course, I walk through exactly how the PCAOB is planning to enforce this and what new regulations we can expect in 2025.

Key Takeaways

- Cognitive Decline: An MIT study cited by the PCAOB found that using LLMs reduces cognitive engagement. Auditors risk losing their critical thinking skills if they over-rely on tools.

- The 27% Rule: In some trials, only about 27% of users actively reviewed AI outputs. This lack of skepticism is a primary regulatory concern.

- PE Incentives: The short-term profit models of Private Equity (5-to-8-year exits) conflict with the long-term stability required for audit quality.

- Independence Risks: Complex ownership structures are creating webs where auditors and clients may share the same PE investors.

- Proactive Regulation: The PCAOB is no longer waiting. It is inspecting AI tools while they are still in development to ensure humans remain responsible for the final opinion.

