I’ve been dealing with this one personally. An email lands from a senior leader — subject line sounds important. You open it and there’s a full memo, structured sections, confident language, maybe even an executive summary. Looks like someone did serious work.

So you start reading. And somewhere around paragraph three, something feels off. The assumptions don’t trace. The risk section is vague in a way that sounds precise. The recommendation doesn’t follow from the analysis. And then it clicks — this is AI output that got forwarded without much review.

Now you have a problem. Because the person who sent this thinks they contributed something. And they’re senior. So what do you actually do?

This Is the Other Side of the Workslop Problem

If you caught our earlier lesson on AI output etiquette, we covered the sender side — how to make sure you’re not the one shipping half-baked AI output dressed up as a deliverable. Researchers at Stanford and BetterUp have a name for it: workslop. AI-generated content that looks polished but lacks the substance to actually move the work forward.

This post tackles the harder problem. What do you do when workslop comes at you from someone above you? In the same research, 40% of desk workers reported receiving it in the past month. And the frustrating part is that the sender almost never knows how it’s landing.

It’s Not a New Problem — Just a New Wrapper

Polish masking thin thinking has been around for decades. Think about the PowerPoint era. In the late ’90s and 2000s, a 40-slide deck felt like proof that someone had done real work. The format became a proxy for rigor. Edward Tufte argued that this dynamic contributed to the Space Shuttle Columbia disaster: engineers had the right data about the foam strike risk, but it was buried in sub-bullets formatted in a way that made it easy to skim past. Decision-makers saw a polished briefing and walked away reassured. The presentation looked complete. The thinking was not.

AI has taken that same dynamic and turbocharged it. In the PowerPoint era, making something look polished still took time. That friction was a useful signal — if someone sent a well-structured document, they at least spent real hours on it. Effort and polish were correlated.

AI breaks that correlation completely. Think of it as the shrink-wrap effect. When software came in a physical box, the packaging signaled that what was inside had been built and tested. AI output is perpetual shrink-wrap — every document looks like it came out of the box ready to go. Whether the software inside actually works is a completely separate question.

Two Forces Making It Worse Right Now

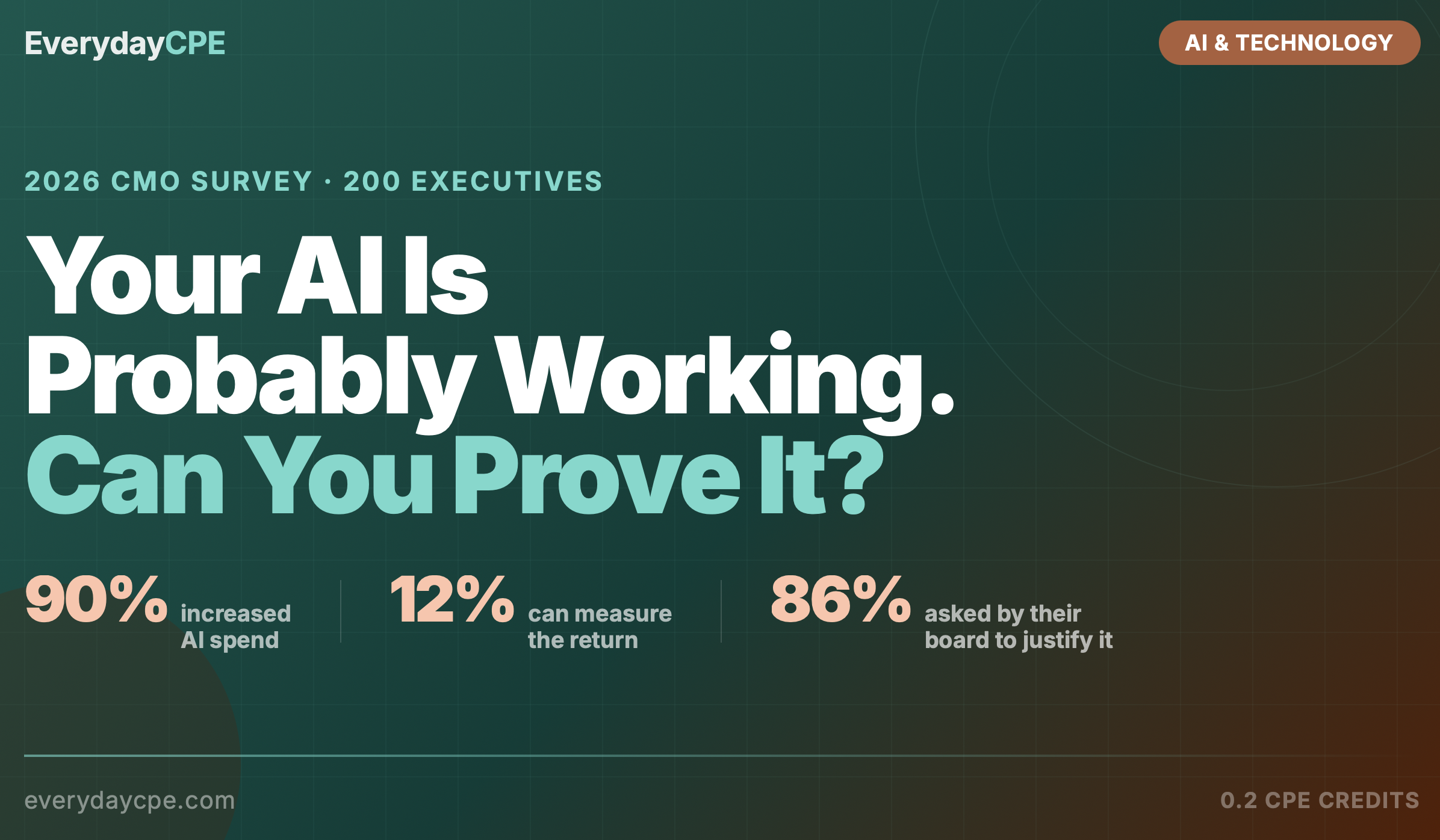

The first is what I’d call the enthusiasm gap. Organizations are actively pushing AI adoption. Leaders feel pressure to show they’re using the tools. The result is that some of the most confident AI output right now is coming from people who know enough to prompt but not enough to evaluate whether what came back is actually good. Respondents in the Stanford and BetterUp research estimated that roughly 15% of the work they receive is AI slop that needs correction, and in organizations where adoption is being mandated, I’d put that number higher.

The second force is a reputation risk that senders can’t see. Among people who received workslop, 54% viewed the sender as less creative, 42% viewed them as less trustworthy, and 37% viewed them as less intelligent. These numbers applied even when the sender was a senior person. Nobody’s telling them. So they keep sending it, and their reputation quietly erodes while the people downstream do the extra thinking to actually move the work forward.

The Curious Architect Approach

When workslop lands in your inbox from a senior leader, you have three bad options: let it go forward and hope it holds up, call it out bluntly and make it awkward, or absorb all the rework yourself and get none of the credit. None of those are great.

The fourth option is what I think of as the Curious Architect move. The core idea: ask questions that build toward the missing substance instead of exposing its absence. Treat the sender as someone who has more depth to share. Put the burden of filling the gap back where it belongs.

Instead of “this needs more work,” you say: “I want to make sure I understand the assumptions before we take this to the client — can you walk me through what’s driving the revenue number on page three?” That question doesn’t imply the number is wrong. It treats the sender as someone with more to offer. And it surfaces the gap without naming it.

A few other versions of the same move:

- “What’s the data source behind this assumption?”

- “How are we thinking about downside scenarios if this rate shifts?”

- “Which part of this do you want me to pressure-test before we send it?”

- “Can you walk me through how we got to this recommendation?”

Think of it like an audit. A good auditor doesn’t walk into a client’s office and say “I think you got this wrong.” They ask: “Can you walk me through how you got here?” Then they let the evidence do the work. Same principle. Same profession.

Two More Plays Worth Having

Share the reputational data — privately. In a one-on-one, as a genuine heads-up: “I read this research recently about how AI-polished work is actually landing with recipients, and thought it was worth sharing.” The 54/42/37 numbers land hard because they’re specific and most people are genuinely surprised. Nobody wants to be quietly viewed as less trustworthy by the people they work with.

Set the standard upstream. If you run a team or own a deliverable, define what “done” looks like before AI output starts circulating. Workslop thrives in ambiguity. When the expectation is clear upfront — sourced assumptions, defined recommendation, traceable logic — the AI-polished-but-hollow document doesn’t survive the first checkpoint.

Key Takeaways

- Workslop is a receiver problem as much as a sender problem. The person who sent it usually has no idea how it’s landing.

- Use the Curious Architect move — ask questions that build toward the missing substance without calling it out directly.

- The reputational data (54% less creative, 42% less trustworthy, 37% less intelligent) is a gift. Share it in the right context.

- Distinguish AI-assisted from AI-delegated. The goal isn’t to slow adoption — it’s to keep AI as a finishing tool, not a thinking replacement.

- Set the standard upstream. Ambiguity is where workslop hides.

Want the CPE credit? Take the full lesson on EverydayCPE and earn 0.2 CPE credits: [lesson link]
